FreeSync 2 vs G-SYNC HDR: What is the difference between these technologies?

Variable refresh rate technology has been a huge boon to gamers since its introduction a few years ago: finally, a technology that could help eliminate the troublesome problem of screen tearing. V-Sync has long been an option in PC games, but at the cost of added input latency and the need to maintain a steady frame rate.

The likes of FreeSync and G-SYNC combat screen tearing by matching the monitor’s refresh rate to the game’s frame rate, whereas normal screens can only run at one fixed refresh rate at a time. These technologies have an operating range, which varies from monitor to monitor: some can do 20-144Hz, others only 40-60Hz, and there is everything in between. They also avoid the input lag that V-Sync introduces. Variable refresh monitors aren’t even hugely expensive anymore; FreeSync models in particular can easily be had for under $150.
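To make the difference between V-Sync and variable refresh more concrete, here is a minimal Python sketch of a simplified timing model. It assumes a fixed 60Hz display with V-Sync, where a finished frame waits for the next refresh, versus a variable refresh display that shows a frame as soon as it is ready, limited only by its maximum refresh rate. The function names are hypothetical, the model ignores buffering and GPU stalls, and it assumes the frame rate stays inside the monitor’s operating range.

```python
import math


def vsync_present_times(ready_ms, refresh_hz):
    """Fixed refresh + V-Sync: each finished frame waits for the next refresh tick."""
    times = []
    last_tick = 0  # assume refresh tick 0 happens at t = 0
    for t in ready_ms:
        # First refresh tick at or after the moment the frame is ready.
        tick = math.ceil(t * refresh_hz / 1000)
        # At most one new frame per refresh.
        tick = max(tick, last_tick + 1)
        times.append(tick * 1000 / refresh_hz)
        last_tick = tick
    return times


def vrr_present_times(ready_ms, max_hz):
    """Variable refresh: show each frame as soon as it is ready, but no sooner
    than one minimum refresh interval (1 / max_hz) after the previous one."""
    min_interval = 1000 / max_hz
    times = []
    last = None
    for t in ready_ms:
        shown = t if last is None else max(t, last + min_interval)
        times.append(shown)
        last = shown
    return times


if __name__ == "__main__":
    # A game rendering at a steady 50 fps (one frame every 20 ms).
    ready = [20, 40, 60, 80]
    for name, shown in [
        ("V-Sync @ 60Hz", vsync_present_times(ready, 60)),
        ("VRR up to 144Hz", vrr_present_times(ready, 144)),
    ]:
        waits = [round(s - r, 2) for s, r in zip(shown, ready)]
        print(name, "extra wait per frame (ms):", waits)
```

In this model the 50 fps frames wait between roughly 3 and 13 ms for a refresh under V-Sync, while the variable refresh display shows each one the moment it is finished.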

The introduction of variable refresh rate monitors has seen interest in 120Hz and higher panels skyrocket. You no longer need to hit a constant 120 frames per second to maintain good visual quality, which lowers the barrier to entry for high refresh rate monitors. We’ve recently seen the likes of ASUS announce 240Hz monitors, a frame rate that current systems will struggle to reach in most games.
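The appeal of variable refresh at high refresh rates comes down to simple arithmetic: each refresh rate implies a per-frame time budget of 1000 / Hz milliseconds. The Python sketch below (the helper names are illustrative, not from any library) prints those budgets and checks whether a given frame rate falls inside a monitor’s variable refresh range, using the 20-144Hz and 40-60Hz ranges mentioned earlier as examples.

```python
def frame_budget_ms(refresh_hz):
    """Time available to render one frame at a given refresh rate."""
    return 1000 / refresh_hz


def in_vrr_range(fps, min_hz, max_hz):
    """True if the display can match this frame rate directly."""
    return min_hz <= fps <= max_hz


if __name__ == "__main__":
    for hz in (60, 120, 144, 240):
        print(f"{hz:>3} Hz -> {frame_budget_ms(hz):.2f} ms per frame")

    # 90 fps is inside a 20-144Hz range but outside a 40-60Hz one.
    print(in_vrr_range(90, 20, 144), in_vrr_range(90, 40, 60))
```

At 240Hz the budget is about 4.17 ms per frame, roughly a quarter of the 16.67 ms available at 60Hz, which is why feeding such a panel a new frame on every refresh is so demanding.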

Recently, both AMD and NVIDIA have announced successors to FreeSync and G-SYNC, and the big addition from both parties is HDR. As 4K TVs and monitors have become standard, so has HDR, though proper 10-bit HDR 4K TVs are still very expensive, at least for the mainstream market.

G-SYNC HDR

Starting with NVIDIA’s aptly named G-SYNC HDR, there hasn’t been much information on what the new technology has to offer; most of what we know relates to the specific monitors being released with it.

The new standard will be accompanied by 4K 144Hz HDR monitors, almost the holy grail of monitor technology. The HDR is full 10-bit, which should help future-proof these monitors. Also included is a technology dubbed QDEF, or Quantum Dot Enhancement Film, which converts the blue light emitted by 384 LED backlight zones into deeper reds and greens. This tech was first used on high-end HDR TV sets. As a result, the colour space is 25% larger than bog-standard sRGB, bringing it closer to the DCI-P3 standard often used in cinemas.
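The “10-bit” figure refers to bits per colour channel. A quick Python calculation (a generic sketch; nothing here is specific to G-SYNC HDR) shows why it matters: each extra bit doubles the number of shades per channel, and the total palette is that number cubed across red, green and blue.

```python
def shades_per_channel(bits):
    """Number of distinct levels a single colour channel can take."""
    return 2 ** bits


def total_colours(bits):
    # One value each for red, green and blue.
    return shades_per_channel(bits) ** 3


if __name__ == "__main__":
    for bits in (8, 10):
        print(f"{bits}-bit: {shades_per_channel(bits):,} shades per channel, "
              f"{total_colours(bits):,} colours")
```

8-bit gives 256 shades per channel (about 16.7 million colours), while 10-bit gives 1,024 (about 1.07 billion), so HDR’s wider brightness range can be covered in finer steps with less visible banding.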

The mention of 4K 144Hz monitors is sure to interest many PC gamers. One can only imagine they won’t be cheap, considering the current price gap between FreeSync and G-SYNC monitors. With so much new technology packed into one monitor, you may be saving up for a while. The idea of so much great tech in one package is an exciting one nonetheless. Availability is still somewhat unknown, with the only official word being “later this year”.

FreeSync 2

The announcement of FreeSync 2 came with a lot more technical information than its competitor’s, so we know much more about what it offers over its predecessor. AMD has stated that FreeSync 2 monitors will be available within the first half of 2017. Rather than being a straight replacement for the original FreeSync, the successor is expected to act as an additional, higher-end option, meaning consumers on any budget can have access to FreeSync in some form.

G-SYNC was known for its wider operating ranges, and AMD aims to make that a thing of the past by ensuring that FreeSync 2 operating ranges are much wider across the board. It is doing this by requiring every FreeSync 2 monitor to meet its LFC, or Low Framerate Compensation, specification. This means the maximum refresh rate must be at least 2.5 times the minimum refresh rate, so if the minimum is 30Hz, the maximum must be at least 75Hz. This is a nice idea from AMD, as it means every FreeSync 2 monitor will have at least a standard operating range.
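The 2.5x rule and the idea behind Low Framerate Compensation can be sketched in a few lines of Python. This is an assumption-based illustration rather than AMD’s driver implementation: `supports_lfc` applies the ratio stated above, and `lfc_refresh` assumes that when the frame rate drops below the monitor’s minimum, each frame is simply shown an integer number of times so the effective refresh rate lands back inside the range. Both function names are hypothetical.

```python
import math


def supports_lfc(min_hz, max_hz, ratio=2.5):
    """FreeSync 2-style check: the maximum refresh must be at least ratio x the minimum."""
    return max_hz >= ratio * min_hz


def lfc_refresh(fps, min_hz, max_hz):
    """Return (refresh_hz, repeats): how often each frame is shown so the panel
    stays inside its range. Assumes fps > 0 and supports_lfc(min_hz, max_hz)."""
    if fps >= min_hz:
        # In range (or above it): refresh tracks the frame rate, capped at the maximum.
        return min(fps, max_hz), 1
    # Below the minimum (hypothetical strategy): repeat each frame just enough
    # times to reach min_hz.
    repeats = math.ceil(min_hz / fps)
    return fps * repeats, repeats


if __name__ == "__main__":
    print(supports_lfc(30, 75))      # the 30-75Hz example above
    print(supports_lfc(40, 60))      # a narrow 40-60Hz panel
    print(lfc_refresh(20, 30, 75))   # 20 fps: each frame shown twice, panel at 40Hz
```

The ratio requirement is what makes the frame-repeating trick safe: the repeated refresh rate is always below `min_hz + fps`, which is less than twice the minimum, so a panel whose maximum is at least 2.5 times its minimum can always accommodate it. A 40-60Hz panel fails the check and would have no room to do this.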

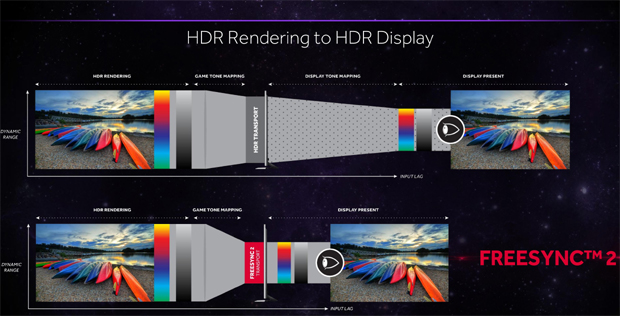

FreeSync 2 also includes full 10-bit HDR, and AMD is looking to standardise HDR on Windows PCs, which is currently a bit of a minefield. At present, HDR content on a Windows machine has to be processed twice (once in hardware and once by Windows), and FreeSync 2 aims to change that. By handling all of the HDR processing in hardware, it takes the middleman out of the equation, making for a much more efficient pipeline.

Windows currently works best with the standard sRGB colour space, so FreeSync 2 can switch seamlessly between the two ways of rendering content: when HDR content is playing, FreeSync 2 takes over, and when it isn’t, Windows takes control.

AMD also made it clear that any GPU that currently supports FreeSync will be able to support FreeSync 2, a nice bonus for those running older GPUs. Lately we have seen quite a few technologies locked to specific hardware for no apparent reason, so it’s nice to see AMD buck the trend, as it so often has.

There hasn’t been any real news on specific monitors, but it is known that LG and Samsung will be focussing on FreeSync 2 in their TVs and monitors. Samsung’s Vice President of Consumer IT Marketing had this to say about the company’s support:

“Samsung has always embraced FreeSync technology, which is why we partnered with AMD from the beginning… We know gamers deserve the absolute best in smooth gaming experiences, and we’re thrilled to see AMD further improving on that technology.”

Overall, FreeSync 2 has many more facets to it, whereas the first iteration focussed purely on variable refresh. FreeSync 2 offers a whole suite of features, such as HDR and tighter requirements for monitors that ensure wider operating ranges than before, meaning you are guaranteed a solid baseline of performance.

AMD has now laid FreeSync 2 out in full, and we are just waiting to see whether NVIDIA has anything more to offer. As it stands, FreeSync 2 looks to offer similar quality at a lower price. It would be unwise to rule NVIDIA out, though: as it showed when it first revealed G-SYNC in 2013, it often has something up its sleeve, and it will be interesting to see what other new features it brings to the table.